A young Montreal patient has not forgotten her recent visit to the emergency room, when she found herself alone in...

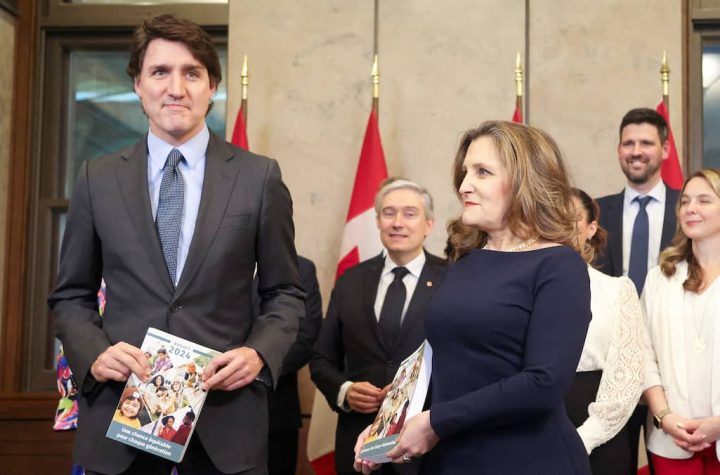

Tuesday's budget was seen as an opportunity for Justin Trudeau's Liberals to return to political glory. However, it was a...

Fans of Asian cuisine, I have a new spot for you to discover in Montcalm, serving brunch and Japanese-inspired dishes. The...

CBC/Radio-Canada will cut fewer positions than expected this year, thanks in particular to new state funding, management announced Wednesday in...

(NEW YORK) Carmaker Tesla will once again put before its shareholders, at its next general meeting in June, its...

Six years ago, Simon bought a mobile canteen for $110,000 and his father agreed to act as guarantor for the...

Last names, first names, contact details, information on banking products... Around ten customers of the National Bank (BN) were "affected by...

Although tax hikes for the wealthy are expected to be announced in the federal budget on Tuesday afternoon, Mario Dumont...

Energy: Are Solar Panel Kits Really Profitable? (Video, 2 min. Published 04/15/2024 22:36.)

Living in Montreal with two kids and no personal car? "Yes, it's possible," Valérie Plante argued Monday, announcing the...