Published 04/26/2024 4:08 pm. Video length: 1 min. Restaurants: The suggested tip may not appeal to all consumers...

Unlike other Canadians, Quebecers grew richer during the pandemic, according to data released by the Institut de...

Abolished six years ago in the United States, net neutrality is finally making its return: the U.S. telecommunications regulator decided on...

(Montreal) Bombardier reported lower profits and revenue in the first quarter, but said its order backlog grew compared with the...

Host Paul Larocque raised his glass to the quality of the discussion among the panelists on the show La Joute...

This page will probably be the last in my notebook. As a secondary school teacher, I had the enormous privilege...

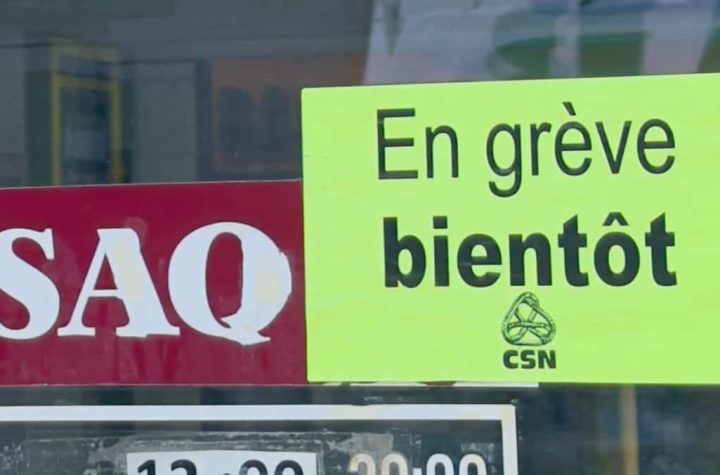

The union of store and office employees of the Société des alcools du Québec, which represents about 5,000 workers in the...

The number of service disruptions on the Réseau express métropolitain has increased with the arrival of colder weather, according to new...

All indications are that the Société des alcools du Québec (SAQ) will have to operate without employees on Wednesdays and...

For many people, drinking a glass of milk or eating grain products or certain fruits and vegetables can trigger food intolerances such...